Note:

This topic has been translated from a Chinese forum by GPT and might contain errors.

Original topic: Abnormal DM writes to TiDB and high TiDB latency: how to troubleshoot?

TiDB version: 5.0.6

DM version: 5.3.0

Background:

Data is replicated to TiDB through DM, and writes are behaving abnormally. The dashboard page shows the delay (see screenshot). What information can I use to troubleshoot the cause of the delay?

Check the slow log to see if there are any slow queries. Alternatively, check the cluster’s resource usage.
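In case it helps with the slow log check, here is a minimal Python sketch that pulls the slowest statements out of a TiDB slow log file. It assumes the usual slow log layout, where each entry starts with a `# Time:` line, carries a `# Query_time:` field in seconds, and ends with the SQL text. The function name `slowest_queries` and the 1-second threshold are my own choices for illustration, not anything from TiDB.

```python
import re
from typing import Iterable, List, Tuple

# Matches the "# Query_time: 1.527627037" field of a slow log entry.
QUERY_TIME = re.compile(r"^# Query_time:\s*([0-9.]+)")


def slowest_queries(lines: Iterable[str], threshold_s: float = 1.0) -> List[Tuple[float, str]]:
    """Return (query_time, sql) pairs at or above threshold_s, slowest first."""
    results: List[Tuple[float, str]] = []
    query_time = None
    sql_parts: List[str] = []

    def flush() -> None:
        # Record the entry collected so far if it is slow enough.
        if query_time is not None and query_time >= threshold_s:
            results.append((query_time, " ".join(sql_parts)))

    for raw in lines:
        line = raw.rstrip("\n")
        if line.startswith("# Time:"):
            # A new entry begins: close the previous one.
            flush()
            query_time, sql_parts = None, []
        elif line.startswith("#"):
            match = QUERY_TIME.match(line)
            if match:
                query_time = float(match.group(1))
        elif line.strip():
            # Non-comment lines are the SQL text of the current entry.
            sql_parts.append(line.strip())
    flush()
    return sorted(results, key=lambda item: item[0], reverse=True)
```

You would call it with an open file, for example `slowest_queries(open(path))`, where `path` points at the slow log of one TiDB node. If nothing slow shows up during the abnormal period, that points away from individual statements and toward the cluster or the hosts.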

First confirm whether the bottleneck is DM or TiDB, and start troubleshooting from there.

If you have set up Prometheus monitoring, it is easy to see IO-related performance metrics.

The latency is indeed quite high. How is the hardware configuration?

Configuration:

CPU: 40 cores

Memory: 128GB

Disk: NVMe

Network: 10 Gigabit

Below is the information obtained from the current investigation:

- During the abnormal delays in DM, there were write-write conflicts. The cluster uses pessimistic transaction mode. The delay persisted even after DM was shut down.

- During the abnormal period, the error log showed “[gc worker] delete range failed on range,” which did not appear during the normal write phase.

tidb-pro-TiDB_2022-08-31T06_22_46.275Z.json (3.7 MB)

I suspect the problem is in TiDB itself, since data could not be written during this period. Nothing abnormal was found on the IO side. Is there any way to see what abnormal condition is causing the delay?

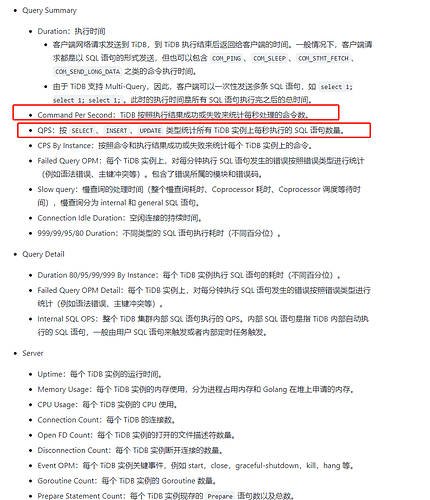

Check the status metrics of all TiDB nodes’ requests and processing through Prometheus.

That saved me. Following the suggestion above, I found one TiDB instance that showed no KV Transaction OPS value while the latency was high. After removing this machine, the latency issue did not recur. My initial conclusion is that the machine's network card is faulty.
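For anyone repeating this check, here is a rough Python sketch of the comparison. It assumes you have already fetched a per-instance rate from the Prometheus HTTP API (`/api/v1/query_range`) and have the JSON response as a dict. The exact PromQL behind the KV Transaction OPS panel is not given in this thread, so take it from the Grafana panel. The function name `idle_instances` and the instance addresses in the example are made up.

```python
from typing import Any, Dict, Iterable, List


def idle_instances(
    response: Dict[str, Any],
    expected_instances: Iterable[str],
    min_ops: float = 0.0,
) -> List[str]:
    """Return instances whose peak value never rises above min_ops.

    Instances listed in expected_instances but absent from the response
    are also reported, since a missing series is itself a warning sign.
    """
    peak = {instance: 0.0 for instance in expected_instances}
    for series in response.get("data", {}).get("result", []):
        instance = series.get("metric", {}).get("instance", "<unknown>")
        # Prometheus returns samples as [timestamp, "value-as-string"].
        values = [float(value) for _, value in series.get("values", [])]
        finite = [v for v in values if v == v]  # drop NaN samples
        peak[instance] = max([peak.get(instance, 0.0)] + finite)
    return sorted(instance for instance, p in peak.items() if p <= min_ops)


# Hypothetical response covering the high-latency window.
example = {
    "status": "success",
    "data": {
        "resultType": "matrix",
        "result": [
            {"metric": {"instance": "10.0.0.1:10080"},
             "values": [[1661926800, "120"], [1661926860, "98.5"]]},
            {"metric": {"instance": "10.0.0.2:10080"},
             "values": [[1661926800, "0"], [1661926860, "NaN"]]},
        ],
    },
}
expected = ["10.0.0.1:10080", "10.0.0.2:10080", "10.0.0.3:10080"]
print(idle_instances(example, expected))
```

An instance that stays at zero or disappears entirely while the others keep serving traffic is the one to look at first, which is how the faulty machine stood out here.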

Thank you, expert~

Network card issue. Traffic monitoring should be able to detect it.
