Note:

This topic has been translated from a Chinese forum by GPT and might contain errors.

Original topic: lightning

[TiDB Usage Environment] Production Environment / Testing / PoC

[TiDB Version]

[Reproduction Path] What operations were performed when the issue occurred

[Encountered Issue: Issue Phenomenon and Impact]

[Resource Configuration]

[Attachment: Screenshot/Log/Monitoring]

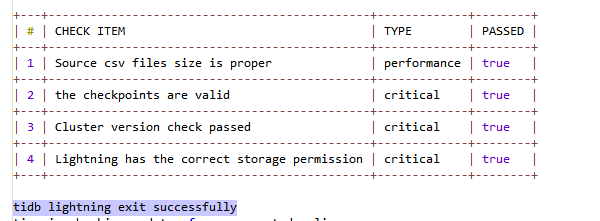

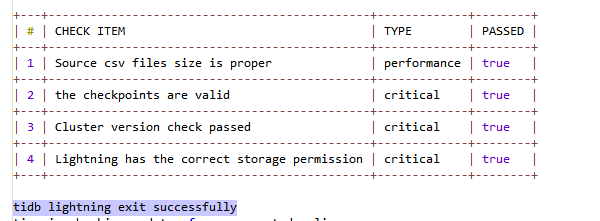

TiDB Lightning exited successfully: the log shows success and none of the logs contain errors, but the imported data volume differs significantly from the source. What could be the reason?

Please post the configuration file for us to take a look.

What exactly is the difference? After importing from the source cluster to the target cluster, does the target have more data or less? Are any other services writing or deleting data on the source cluster?
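One way to answer that question table by table is to collect row counts on both sides and diff them. The following is only a minimal sketch: the function name `compare_counts`, the table names, and the numbers are made up for illustration, and it assumes the counts were already gathered separately (for example with `SELECT COUNT(*)` on each cluster).

```python
def compare_counts(source, target):
    """Return {table: (source_rows, target_rows)} for every table whose counts differ.

    A table missing on one side is reported with a count of 0 there.
    """
    mismatches = {}
    for table in sorted(set(source) | set(target)):
        src = source.get(table, 0)
        tgt = target.get(table, 0)
        if src != tgt:
            mismatches[table] = (src, tgt)
    return mismatches


# Made-up numbers for illustration only; real values would come from
# running SELECT COUNT(*) on each table in both clusters.
source_counts = {"db1.orders": 300_000_000, "db1.users": 1_200}
target_counts = {"db1.orders": 3_000_000, "db1.users": 1_200}

for table, (src, tgt) in compare_counts(source_counts, target_counts).items():
    print(f"{table}: source={src} target={tgt} missing={src - tgt}")
```

Running the comparison while nothing else writes to the source keeps the two sets of counts comparable.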

The target has less data. The source has over 300 million rows, but only about 3 million ended up in the target. The import reported success and stopped on its own. The log just printed “success.”

Try importing another table and see if it works. What version are you using? I used Dumpling + Lightning for migration and haven’t encountered similar issues.

I have the same setup as you: just Dumpling + Lightning, with the same configuration I use for other tables. Could it be caused by a memory issue?

My guess is that the schema_pattern is written incorrectly.

Which version are you using? The new version uses file-pattern.

Check the Lightning logs to see whether any files were filtered out. Also check whether they contain any error messages.
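For a large log, a small keyword scan can narrow down where to look. This is a rough sketch only: the keywords `skip`, `filter`, and `ignore` are guesses, not the known wording of Lightning's log messages, and `find_suspicious_lines` and the log path in the comment are hypothetical names.

```python
def find_suspicious_lines(lines, keywords=("skip", "filter", "ignore")):
    """Return (line_number, text) pairs whose text contains any keyword, case-insensitively."""
    lowered = [k.lower() for k in keywords]
    hits = []
    for number, line in enumerate(lines, start=1):
        text = line.rstrip("\n")
        if any(k in text.lower() for k in lowered):
            hits.append((number, text))
    return hits


# Hypothetical usage: the log path is a placeholder, not a known default.
# with open("tidb-lightning.log", encoding="utf-8", errors="replace") as f:
#     for number, text in find_suspicious_lines(f):
#         print(number, text)
```

If the scan finds nothing, adjust the keywords to whatever wording your version's log actually uses.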

During an import, data can be filtered out or imported incorrectly because of wrong filter conditions or other configuration problems. Check the filter conditions and configuration files, then verify after the import that all required data is present.
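To illustrate how a slightly wrong pattern can silently drop most of a table, here is a minimal sketch that tests file names against a regular expression. Everything in it is assumed for illustration: the file names, the pattern, and the helper `split_by_pattern` are hypothetical. It also uses Python's `re` with full-match semantics, which may not be exactly how Lightning evaluates its own patterns.

```python
import re


def split_by_pattern(filenames, pattern):
    """Split filenames into (matched, unmatched) using a full-match regex."""
    regex = re.compile(pattern)
    matched, unmatched = [], []
    for name in filenames:
        (matched if regex.fullmatch(name) else unmatched).append(name)
    return matched, unmatched


# Hypothetical dump file names and a hypothetical, deliberately too-narrow
# pattern that only accepts a single-digit file index.
files = ["db1.orders.0.sql", "db1.orders.1.sql", "db1.orders.10.sql"]
too_narrow = r"db1\.orders\.[0-9]\.sql"

matched, unmatched = split_by_pattern(files, too_narrow)
print("would be imported:", matched)
print("would be ignored:", unmatched)
```

A pattern like this would raise no error. The unmatched files would simply be left out, which would fit an import that reports success but contains far fewer rows than the source.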