Note:

This topic has been translated from a Chinese forum by GPT and might contain errors. Original topic: Error when simulating a production cluster deployment on a single machine: ssh_stderr: Failed to enable unit: Unit file pd-2379.service does not exist

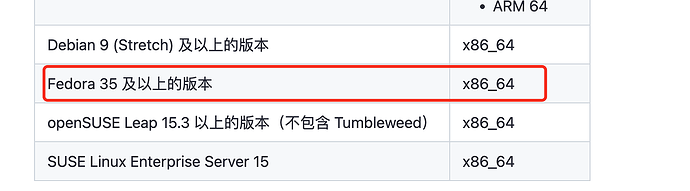

OS: Fedora Linux 38 (Workstation Edition) x86_64

Kernel: 6.3.7-200.fc38.x86_64

$ tiup cluster deploy tidb_local v7.1.0 ./topo.yaml --user root -p

tiup is checking updates for component cluster ...

Starting component `cluster`: /home/user/.tiup/components/cluster/v1.12.3/tiup-cluster deploy tidb_local v7.1.0 ./topo.yaml --user root -p

Input SSH password:

+ Detect CPU Arch Name

- Detecting node 172.21.117.108 Arch info ... Done

+ Detect CPU OS Name

- Detecting node 172.21.117.108 OS info ... Done

Please confirm your topology:

Cluster type: tidb

Cluster name: tidb_local

Cluster version: v7.1.0

| Role | Host | Ports | OS/Arch | Directories |
| ---- | ---- | ----- | ------- | ----------- |
| pd | 172.21.117.108 | 2379/2380 | linux/x86_64 | /data/tidb/deploy/pd-2379,/data/tidb/data/pd-2379 |
| tikv | 172.21.117.108 | 20160/20180 | linux/x86_64 | /data/tidb/deploy/tikv-20160,/data/tidb/data/tikv-20160 |
| tikv | 172.21.117.108 | 20161/20181 | linux/x86_64 | /data/tidb/deploy/tikv-20161,/data/tidb/data/tikv-20161 |
| tikv | 172.21.117.108 | 20162/20182 | linux/x86_64 | /data/tidb/deploy/tikv-20162,/data/tidb/data/tikv-20162 |
| tidb | 172.21.117.108 | 4000/10080 | linux/x86_64 | /data/tidb/deploy/tidb-4000 |
| tiflash | 172.21.117.108 | 9000/8123/3930/20170/20292/8234 | linux/x86_64 | /data/tidb/deploy/tiflash-9000,/data/tidb/data/tiflash-9000 |
| prometheus | 172.21.117.108 | 9090/12020 | linux/x86_64 | /data/tidb/deploy/prometheus-9090,/data/tidb/data/prometheus-9090 |
| grafana | 172.21.117.108 | 3000 | linux/x86_64 | /data/tidb/deploy/grafana-3000 |

Attention:

1. If the topology is not what you expected, check your yaml file.

2. Please confirm there is no port/directory conflicts in same host.

Do you want to continue? [y/N]: (default=N) y

+ Generate SSH keys ... Done

+ Download TiDB components

- Download pd:v7.1.0 (linux/amd64) ... Done

- Download tikv:v7.1.0 (linux/amd64) ... Done

- Download tidb:v7.1.0 (linux/amd64) ... Done

- Download tiflash:v7.1.0 (linux/amd64) ... Done

- Download prometheus:v7.1.0 (linux/amd64) ... Done

- Download grafana:v7.1.0 (linux/amd64) ... Done

- Download node_exporter: (linux/amd64) ... Done

- Download blackbox_exporter: (linux/amd64) ... Done

+ Initialize target host environments

- Prepare 172.21.117.108:22 ... Done

+ Deploy TiDB instance

- Copy pd -> 172.21.117.108 ... Done

- Copy tikv -> 172.21.117.108 ... Done

- Copy tikv -> 172.21.117.108 ... Done

- Copy tikv -> 172.21.117.108 ... Done

- Copy tidb -> 172.21.117.108 ... Done

- Copy tiflash -> 172.21.117.108 ... Done

- Copy prometheus -> 172.21.117.108 ... Done

- Copy grafana -> 172.21.117.108 ... Done

- Deploy node_exporter -> 172.21.117.108 ... Done

- Deploy blackbox_exporter -> 172.21.117.108 ... Done

+ Copy certificate to remote host

+ Init instance configs

- Generate config pd -> 172.21.117.108:2379 ... Done

- Generate config tikv -> 172.21.117.108:20160 ... Done

- Generate config tikv -> 172.21.117.108:20161 ... Done

- Generate config tikv -> 172.21.117.108:20162 ... Done

- Generate config tidb -> 172.21.117.108:4000 ... Done

- Generate config tiflash -> 172.21.117.108:9000 ... Done

- Generate config prometheus -> 172.21.117.108:9090 ... Done

- Generate config grafana -> 172.21.117.108:3000 ... Done

+ Init monitor configs

- Generate config node_exporter -> 172.21.117.108 ... Done

- Generate config blackbox_exporter -> 172.21.117.108 ... Done

Enabling component pd

Enabling instance 172.21.117.108:2379

Failed to enable unit: Unit file pd-2379.service does not exist.

Error: failed to enable/disable pd: failed to enable: 172.21.117.108 pd-2379.service, please check the instance's log(/data/tidb/deploy/pd-2379/log) for more detail.: executor.ssh.execute_failed: Failed to execute command over SSH for 'tidb@172.21.117.108:22' {ssh_stderr: Failed to enable unit: Unit file pd-2379.service does not exist.

, ssh_stdout: , ssh_command: export LANG=C; PATH=$PATH:/bin:/sbin:/usr/bin:/usr/sbin /usr/bin/sudo -H bash -c "systemctl daemon-reload && systemctl enable pd-2379.service"}, cause: Process exited with status 1
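
To narrow this down, it may help to first check whether the unit file that `systemctl enable` complains about is actually on disk. The sketch below is only a diagnostic aid, not a fix. It assumes the unit files end up in `/etc/systemd/system` (with `/usr/lib/systemd/system` checked as a fallback); the helper name `find_unit_file` is made up for illustration. Since Fedora ships with SELinux in enforcing mode by default, the script also prints the file's SELinux context and the current SELinux mode, in case systemd is being prevented from reading a file that does exist. That is a hypothesis to check, not a confirmed cause.

```shell
#!/usr/bin/env bash
# Diagnostic sketch: is the unit file from the error message actually on disk?
# find_unit_file is a hypothetical helper, not part of tiup or systemd.

# Print the path of the first directory that contains the unit file.
# Returns 0 if found, 1 otherwise.
find_unit_file() {
  local unit="$1"
  shift
  local dir
  for dir in "$@"; do
    if [ -f "$dir/$unit" ]; then
      printf '%s\n' "$dir/$unit"
      return 0
    fi
  done
  return 1
}

unit="pd-2379.service"   # unit name taken from the error message

# Assumption: these are the directories where the unit file would be placed.
if path=$(find_unit_file "$unit" /etc/systemd/system /usr/lib/systemd/system); then
  echo "found: $path"
  # -Z adds the SELinux security context to the listing (GNU ls).
  ls -lZ "$path" || true
else
  echo "not found: $unit"
fi

# Report the SELinux mode if the tool is available.
if command -v getenforce >/dev/null 2>&1; then
  echo "SELinux mode: $(getenforce)"
fi
```

If the file is reported as found but `systemctl enable` still says it does not exist, the context and mode printed above would be the next thing to look at. If it is not found, the problem is more likely in the deploy step itself.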