Note:

This topic has been translated from a Chinese forum by GPT and might contain errors. Original topic: TIKV实例上TOP获取到的每个线程的含义 (The meaning of each thread shown by top on a TiKV instance)

[TiDB Usage Environment] Production Environment

[TiDB Version] V6.1.7

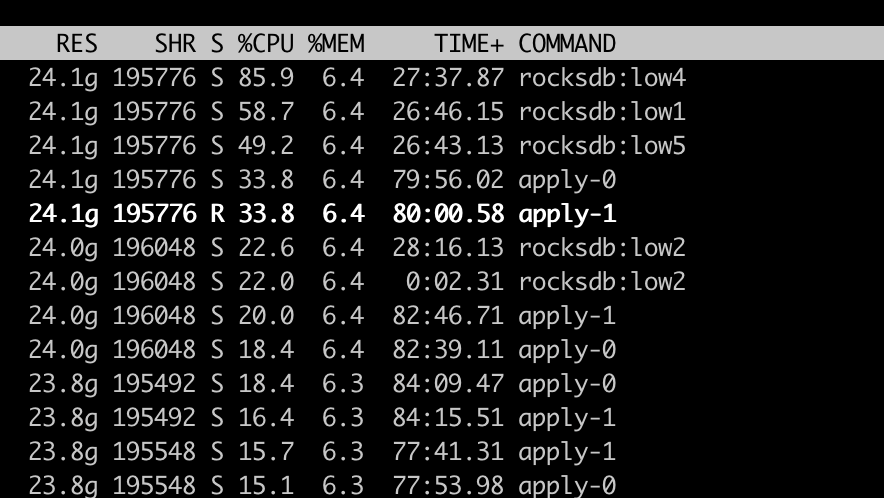

[Encountered Problem: Phenomenon and Impact] During the full data import stage in production, running `top -H` on a TiKV instance shows many `rocksdb:low0` to `rocksdb:low6` threads and `apply-0` to `apply-1` threads. What are the `rocksdb:low` threads doing? The import currently runs at about 100,000 rows per second. Can any configuration be tuned to increase the import speed? [Hardware resources are plentiful; a single TiKV process is using only about 4 CPU cores.]
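To make the `top -H` picture easier to compare across runs, here is a minimal sketch that sums per-thread CPU usage by thread-name group. The thread names come from the output described above; the CPU percentages and the helper names `thread_group` and `cpu_by_group` are hypothetical and only for illustration, not values read from the screenshot.

```python
import re
from collections import defaultdict


def thread_group(name: str) -> str:
    """Drop a trailing numeric index: 'rocksdb:low3' -> 'rocksdb:low', 'apply-1' -> 'apply'."""
    return re.sub(r"[-_]?\d+$", "", name)


def cpu_by_group(samples):
    """Sum %CPU per thread group and return the groups sorted by total, highest first."""
    totals = defaultdict(float)
    for name, cpu in samples:
        totals[thread_group(name)] += cpu
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical (thread name, %CPU) pairs, as one might copy them from `top -H`.
samples = [
    ("rocksdb:low0", 35.0),
    ("rocksdb:low1", 30.0),
    ("apply-0", 60.0),
    ("apply-1", 55.0),
]

if __name__ == "__main__":
    for group, cpu in cpu_by_group(samples).items():
        print(f"{group:15s} {cpu:6.1f}")
```

Grouping this way shows at a glance which thread pool accounts for most of the roughly 4 cores in use, which is usually the first thing to check before changing any configuration.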

[Attachment: Screenshot/Log/Monitoring]